A games developer with 18+ years' experience building end-to-end products, from prototyping to delivery, including AAA console, AR/VR/XR, web and F2P mobile games for companies such as EA, King and Snapchat.

Loves learning and solving problems and challenges, whether technical, managerial or design-related, to create impactful user experiences.

Has worked with cross-discipline teams, both large and small, mentored team members and students, and managed multiple stakeholders to build cohesive project roadmaps.

Wants to create great products and have some fun along the way (๑˃ᴗ˂)ﻭ


Harry Potter: Order of the Phoenix was my first job in the industry, as one of the Level Integrators, leaning more towards the technical side than design.

My main role was to implement many of the meta-game features and designs that encouraged the player to explore the world of Hogwarts in exchange for rewards such as video interviews with the cast and concept images.

All the logic for game objects' unique interactions and reactions was controlled through C++ 'scripts', which sounds unusual and had its fair share of pros and cons during development.

This project is probably where I learned the most about programming on a large-scale project, as I was lucky to be in a team of veteran game developers who encouraged us to ask questions and were willing to answer in detail.

They taught me about the advantages of component-based architecture, the importance of CI, multi-platform development and the value of good tools and workflows. Having just come out of university, I found this a fantastic opportunity to learn as much as I could from everyone and their experiences.

During the latter half of the project, I started to be given more programmer-orientated tasks, as my lead knew that was the direction I wanted to head in. I worked on extending the scripting logic API and providing programmer support for the rest of the Integration team, most of whom came from a design background. This reduced the burden and disruption on the main game development team.

Harry Potter: Half-Blood Prince was my second title and the start of my career as a gameplay programmer, as I was given the task of prototyping and implementing the Potions mini game. This was a huge responsibility, as it was one of the three core game mechanics in Half-Blood Prince and would be used repeatedly throughout the game.

This is where I really learned the importance of fast prototyping, and how proving that something worked mattered more than doing it 'right'. For every new idea that came up for potions, I quickly cobbled together a working version under guidance from my lead to see how well it worked.

Everything, from the core mechanic to the layout and camera angle, was reworked multiple times; the Wii controls alone went through 7 or 8 versions before focus testing with our target audience to see which worked best and, more importantly, why. The mini game improved incrementally throughout development through 'baby steps' and became one of the most polished features in the game.

From the GamesRadar Wii review: "Let's start with potions, as it's the Big New Thing in Half-Blood Prince. It's brilliant. ... Not having ever made a potion we can't judge how accurate it all is, but the gestures are spot-on and fun to perform - it's a truly great mini game."

One of the larger unexpected problems that cropped up late in development was running out of memory for assets, due to the sheer number of unique ingredients and recipes needed for potions, and I had to find a solution without any major system rewrite.

Seeing that the head and body memory slots were unused during potions, I organised the data so that each recipe's set of ingredients occupied one or two of these slots, since only one recipe would be loaded at any one time. This meant only a small change to the potions initialisation stage was needed to handle the load.

Need for Speed: Shift was a bit of a departure from my previous gameplay-orientated roles, as I was asked to be a frontend programmer using a Flash-based library on an existing engine.

As it was an old codebase and toolset, few developers at the company knew exactly how the pipeline exported data into the game, beyond the fact that a bunch of batch files ran the command line tools.

Through some trial and error and the use of tools like Process Explorer and WinMerge, we managed to work out which batch files to use for different sets of data and also added some extra error reporting in the scripts.

As the frontend still had all the graphics from an old game, I stripped all the screens down to the bare bones, ready for the artist to add new graphics and animations at a later stage.

The livery select and editing screens were my main contributions to the game, covering logic, presentation, and saving and loading to memory card and UMD, including meeting the TRCs associated with files on external media.

My main role on this project was prototyping a two-player version of an existing mini game on the Wii and changing the controls so it could be played with just the Wiimote, as the game was focused on co-operative play. It didn't take long to reach a solid, workable version, after which I moved on to tool support for the integrators and art outsourcers.

As the outsourcers were external, we had to be careful about what data they could access, to reduce the risk of a game leak while still letting them test their work in-game. An external Perforce server was set up for them as a mirror of ours, without any of the level data except for a blue room sandbox that I created.

This sandbox had the same lighting and effects setup as the rest of the levels, plus a set of debugging tools I implemented that let the user play any animation on a selected model and scrub through the frames to look for possible problems with the exported data.

Other side work included adding features to the existing development tools at the development team's request, such as building the game data and launching the game in one click, and speeding up Wii image build times after talking with a few other developers.

There were rumours that builds didn't take as long on Windows 7, and since it was easy to try, I benchmarked our existing setup on Windows XP against Windows 7. There was a 30-50% reduction (!) from the OS change alone, which, after some research, came down to the improved file caching in Windows 7.

Unfortunately, this project was cancelled during production and the team moved onto Create.

EA Create was my last game at EA. During that time, I was the team's support engineer, taking on outstanding tasks that didn't fit into any of the other developers' specialised areas and focusing on keeping the team moving forward.

My main task was monitoring and maintaining the daily code and data builds and dealing with any build issues, which were usually memory-related. This meant I was also investigating our in-game memory usage, which became more important as we moved from a level-based streaming system to a dynamic, on-demand, per-object loading system that I helped another developer integrate into the game.

I partnered with IT to have PCs ready for newcomers from the moment they arrived by creating a base image for the PCs with most of the tools installed. Once I was given the new PC, I would update the tools via our in-house auto-installer, which I maintained, and build the latest version of the game on all platforms.

A new starter could now start working on the game within 30 minutes, compared to the day it used to take waiting for everything to install and download.

WMS Gaming was my first job outside the games industry, where I worked on two video slot machine themes, Commanding Officer and Periscope Pays, based on the Battleship IP from Hasbro. Initially, there were meant to be two programmers on the project (one per theme), but as another project was overrunning, I ended up as the main programmer on both themes, developing them in parallel. Thankfully, both shared a common codebase, which helped reduce the workload.

Each theme had its own unique set of bonus features for the player to trigger. As the games have less depth than console games, there is a heavy focus on the player experience, such as how everything is presented and fed back to the player. As the Producer and Designer were at the head office in Chicago, we were constantly sending builds for review and gathering feedback during the early stages to make sure the experience and game flow were right early on.

A lot of focus and testing is placed on the strict technical requirements that states and countries have for these games, which centre on accuracy, reliability and accountability. Common test cases include returning to the same state if the machine is power-cycled and being able to view the game history if a player raises a dispute.

During this time, I also developed two small tools to help with my largest time sink during bug fixing: anything to do with translated assets.

It was incredibly difficult to compare translated assets against each other and check whether any were missing, so Locale Detective was born. The tool let me quickly scan and search assets in the data folder and see at a glance whether a translated asset was missing or incorrectly named, which saved me hours during the late phases of both games. The full readme can be found here.
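Locale Detective's internals aren't described here, but the core check it performs, comparing each locale's assets against a reference locale, can be sketched with set differences. This is a minimal Python sketch under assumed conditions: asset names have already been collected per locale (for example by walking the data folder), and `compare_locales` and the sample file names are hypothetical, not the original tool's code.

```python
from typing import Dict, List, Set, Tuple


def compare_locales(
    assets_by_locale: Dict[str, Set[str]],
    reference_locale: str,
) -> Dict[str, Tuple[List[str], List[str]]]:
    """Compare every locale's assets against a reference locale (hypothetical helper).

    Returns, per non-reference locale, a pair of sorted lists:
    (assets missing from this locale, assets present only in this locale).
    Unexpected names are often misnamed translations of a reference asset.
    """
    reference = assets_by_locale[reference_locale]
    report: Dict[str, Tuple[List[str], List[str]]] = {}
    for locale, assets in sorted(assets_by_locale.items()):
        if locale == reference_locale:
            continue
        missing = sorted(reference - assets)
        unexpected = sorted(assets - reference)
        report[locale] = (missing, unexpected)
    return report


if __name__ == "__main__":
    # Hypothetical asset lists; in practice these would come from the data folder.
    assets = {
        "en_US": {"bonus_intro.wav", "win_banner.png"},
        "fr_FR": {"bonus_intro.WAV", "win_banner.png"},
        "de_DE": {"win_banner.png"},
    }
    for locale, (missing, unexpected) in compare_locales(assets, "en_US").items():
        print(f"{locale}: missing={missing} unexpected={unexpected}")
```

Listing "unexpected" names next to "missing" ones is what makes a misnamed file (such as a wrong extension case) stand out, rather than it simply looking absent.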

Another frustration was the amount of manual work a developer needed to send a build to the head office for review: it required a clean codebase on the developer's machine, local testing on a device in the studio and then sending the build to the remote servers. The whole process could easily take half a day, especially if the build request came in the middle of a task, as it took time to get the code back into a playable state.

A couple of spare PCs and some lunchtimes later, I had installed and was maintaining the company's first continuous integration server, using Jenkins, which performed static code analysis and built and deployed nightly builds of all active projects to the test devices in the studio. I also added a job that allowed anyone to send a build to, or retrieve one from, any office's build server.

I joined Happy Finish to further widen my experience and knowledge in related interactive media industries. It was a much more client-facing role than expected, with frequent meetings (in person and over the phone) to provide progress updates and clarify briefs/requests to better understand the client's vision for the product.

The department's main aim was to create unique experiences using hardware like the Oculus Rift, Leap Motion and Kinect, pairing them with the CG work created in-house to build highly polished applications.

This included the Bazooka Budz augmented reality mobile app, which I took ownership of mid-project and carried through to release on both the Apple App Store and Google Play Store. Another was Happy Interactions, which I oversaw as technical lead, evaluating and experimenting with various hardware to see what could be used depending on the application's environment.

We also explored what could be possible by creating proof-of-concept prototypes, including a virtual showroom that the user could walk around using the Oculus Rift and the Kinect.

The interactive department was still relatively new, and I was able to bring my industry experience to the team, mentoring the junior programmers, providing feedback on usability within the apps, and driving development processes and adapting them for a production-driven company.

This was a challenge, as the existing workflows and managers were used to a linear production schedule rather than a typical iterative software development cycle, which made quotes difficult to calculate up front. Over time, I learned more about how the sales team pitched ideas to prospective clients, which helped me frame my estimates in the correct context, highlight potential issues and/or provide further creative input to the pitches.

I returned to my roots at King, focusing on my previous experience as a Gameplay Programmer, augmenting it with King’s vast knowledge of making hundreds of casual games and learning more about their creative processes.

All the projects I worked on were developed by small teams, usually a programmer, an artist and a designer. The focus was on rapid iteration of ideas and feedback to fine-tune game mechanics, so I looked for ways to reduce any bottlenecks when it came to making changes.

A major part of this was building tools to change content early in the development phase, so that the team could quickly iterate on ideas rather than manually editing data files.

I try to understand how others use the pipeline, listening to feedback and observing their usage to find ways to make creating and iterating on content easier and simpler. Some of the best tool features allowed us to edit levels mid-game, undo previous moves and play back the game in slow motion, which made features much easier to demo and debug without developer involvement.
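How the undo feature was built isn't described; one common way to support undoing moves in a small puzzle-style prototype is a stack of state snapshots. A minimal Python sketch, assuming the game state is small enough to deep-copy on every move; `UndoHistory` and `commit` are hypothetical names.

```python
import copy
from typing import Generic, List, TypeVar

State = TypeVar("State")


class UndoHistory(Generic[State]):
    """Snapshot-based undo: every committed move stores a deep copy of the state."""

    def __init__(self, initial: State) -> None:
        self._snapshots: List[State] = [copy.deepcopy(initial)]

    def commit(self, state: State) -> None:
        # Record the state after a move; copying keeps later mutations out of history.
        self._snapshots.append(copy.deepcopy(state))

    def undo(self) -> State:
        # Drop the latest snapshot but never the initial one.
        if len(self._snapshots) > 1:
            self._snapshots.pop()
        return copy.deepcopy(self._snapshots[-1])

    @property
    def current(self) -> State:
        return copy.deepcopy(self._snapshots[-1])


if __name__ == "__main__":
    board = [[0, 0], [0, 0]]
    history = UndoHistory(board)
    board[0][0] = 1
    history.commit(board)
    board[1][1] = 2
    history.commit(board)
    print(history.undo())  # [[1, 0], [0, 0]]
    print(history.undo())  # [[0, 0], [0, 0]]
```

Whole snapshots are simple but memory-hungry; for larger states, recording each move as a reversible command is the usual alternative, and a recorded move list also lends itself to replaying a session at reduced speed.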

With design, I always approach the implementation of the mechanics from the player’s view, identifying potential issues and improvements that are not always apparent during conception. This is fed back to the designer, usually before the implementation is finished and tweaked as necessary.

The focus on tools, along with the shorter feedback loop, has increased the speed at which we implement new features in our prototypes. The builds are sent to the management team for feedback and review, and they have recognised our team's turnaround time as the fastest in the company.

I am also currently broadening and updating my skills with courses supplied by King. These include accredited Agile courses and Scott Meyers' Modern C++ training course, which has been useful for understanding the nuances of C++11.

Between May and July 2016, I was live streaming games development on Twitch to see if it was possible to generate enough revenue to sustain continuous game development.

I wanted to break out of the hit driven loop of games development and reduce the financial risk which traditionally comes with it.

Games development is ultimately a debt-first process. Games take time and/or money to create, and until they reach a playable, presentable state, crowdfunding, early access and publishers are not an option.

It’s not even guaranteed that the game will earn back the cost of development. In a lot of ways, it’s a gamble and there are only so many bets that can be made before running out of time and money.

While I was brainstorming what I could do to minimise or mitigate the risk, Twitch.tv had started to focus on live streaming non-gaming content such as cooking, art, music and, more relevantly, games development and programming.

I knew that game streamers had become very popular, with quite a few making significant money, much as YouTubers have. However, at the time, I couldn't imagine that watching people develop games live would have the same appeal.

Yet it looked like it could be an interesting way to solve the debt-first issue mentioned above. What if the development process could provide entertainment value, and viewers could donate and/or subscribe to the channel?

Below are links to a series of articles about my stream setup (both hardware and software), as well as my thinking, research and the outcome of this experiment.

Although the company's core business was its WebGL engine and cloud-based editor, it would also take on client work to help publicly showcase the engine's capabilities. I joined PlayCanvas primarily as a content creator to manage this work and allow the core technology engineers to focus on improving the engine and tools.

My first project was for Miniclip: a promotional Halloween title (Virtual Voodoo) created within 5.5 weeks, where I was project lead and developer alongside a dedicated 3D artist.

Due to the short deadline, the focus was on creating a shippable MVP and then building layers of functionality on top to guarantee a release. Builds were sent to Miniclip early and frequently to ensure they were happy with the game's direction and to identify any issues as early as possible. The game was well received, with an average rating of 4/5 on Miniclip.

In between client work, I expanded the tutorials section with over 30 sample projects to help build a knowledge base for users. As a team, we identified common functionality that users would want to implement, along with frequently asked questions, to build a prioritised list of samples to create.

My final project was to create an experience that showcased the potential of VR through the browser using WebVR. The primary goal was to create a 'responsive' VR experience that had the same functionality no matter the platform.

Whether the user was on a Google Cardboard, a Google Daydream or a full room-scale VR setup, all of the experience's functionality was accessible. I had to cater for the lower end without sacrificing the fidelity and experience of controllers like the Oculus Touch.

Google showcased the project on its blog in the Chrome 56 release post announcing native support for WebVR, saying:

"WebVR allows developers to build an experience that scales across all VR platforms from Google Cardboard and Daydream to desktop VR headsets, while also supporting 2D displays. Different platforms have different capabilities and the PlayCanvas WebVR Lab project gives developers an example of how to manage that diversity." Megan Lindsay, Google Product Manager for WebVR

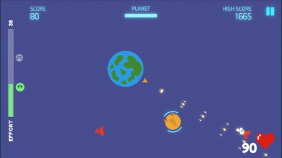

Joining Beat Fitness Games (BFG) gave me the opportunity to be at a company at a very early stage, with a novel angle and audience. The founder, Gareth Davies, had created an algorithm that could measure a user's 'effort' during exercise using data from an off-the-shelf heart rate monitor.

The concept was to create a series of games that would use it to motivate the user during cardio exercise, typically a boring routine in the gym.

I was the only developer for the company's first 12-ish months, which was a new experience for me. While I was excited at the prospect of deciding on and architecting a game and using the workflows I thought would be best, it also came with the realisation that I had to do *everything*. Balancing technical demands/debt against pushing gameplay forward in the games themselves was something I struggled with, and towards the end of my role, I moved to more of a gameplay-first methodology, as we needed product first and foremost.

The first task was to refactor the founder's prototype game project into a game and a separate core library that would handle the connection to the heart rate monitor and the algorithm that calculated the effort of the user.

This allowed us to iterate on the algorithm independently, quickly prototype new ideas and drop the library into existing projects. For example, we wanted to try a runner-style game and used Unity's Trash Dash project as the prototype base. Integrating the core library took very little time, and we had a working demo on device within the hour.

Over the course of the prototypes, I iterated on a one-click build pipeline that would create builds, update version numbers, sign APKs, etc., to make build creation much easier and less error-prone. I also integrated a debug system that let us easily tweak gameplay constants during play, as a lot of user testing was done in a gym, and that also let us demo/test the game without exercising.

We wanted to keep things the 'Unity way' as much as possible and use the ScriptableObject architecture pattern shown in this talk by Ryan Hipple. It worked well enough to decouple GameObjects from each other without too much boilerplate code.

I strove to keep documentation up to date, as I knew I couldn't keep everything in my head at once and we wanted to grow the team in the future. I created test plans for each of our games and the core library, as well as run sheets for deploying to the Google Play Store, using the build system, and adding in-app purchases, analytics, etc.

The major technical challenge was heart rate lag: the user could be exerting a large amount of effort, but it would take time for their heart rate to climb to match. This meant that reaction-based mechanics driven purely by heart rate monitor data were not possible, so we had to design the game mechanics around the lag, for example with a charge mechanic or by giving the user time to react to an incoming threat/obstacle.
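The charge mechanic can be sketched as a meter that accumulates effort over time, so a lagging signal still builds towards an action instead of demanding an instant response. A minimal Python sketch, assuming effort arrives as a normalised 0 to 1 value each frame; `ChargeMeter`, `charge_rate` and `capacity` are hypothetical names, not the BFG core library's API.

```python
class ChargeMeter:
    """Builds charge from an effort value (0..1) over time and fires when full.

    Because charge accumulates, a heart-rate-based effort signal that lags
    behind the player's actual effort still fills the meter; the game never
    asks for an instant reaction to the raw reading.
    """

    def __init__(self, charge_rate: float = 0.5, capacity: float = 1.0) -> None:
        self.charge_rate = charge_rate  # charge gained per second at full effort
        self.capacity = capacity
        self.charge = 0.0

    def update(self, effort: float, dt: float) -> bool:
        """Advance by dt seconds; return True on the frame the charge is released."""
        effort = max(0.0, min(1.0, effort))  # clamp to the expected effort range
        self.charge = min(self.capacity, self.charge + effort * self.charge_rate * dt)
        if self.charge >= self.capacity:
            self.charge = 0.0  # release and start charging again
            return True
        return False


if __name__ == "__main__":
    meter = ChargeMeter(charge_rate=0.5)
    # Effort ramps up slowly, as a lagging heart rate would report it.
    readings = [0.2, 0.4, 0.6, 0.8, 1.0, 1.0]
    for second, effort in enumerate(readings):
        fired = meter.update(effort, dt=1.0)
        print(second, round(meter.charge, 2), fired)
```

Tuning `charge_rate` is one knob for the 'perceived effort' to in-game 'output' curve: it sets how long sustained effort takes to pay off, which can be matched to how slowly the heart rate responds.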

We also spent a lot of time in each prototype looking at what made the user feel good when exerting effort (especially at the top end), which relied on a combination of visuals and game mechanics to balance the curve from 'perceived effort' to 'output' in the game itself.

We ended up with two titles that are (at the time of writing) with test groups: Dynamo Dash, a puzzle game that uses only the effort calculation to control actions, and Dynamo Defender, an arcade shooter where the user holds the phone to move the ship and effort is linked to firepower.

My motivation for taking on a teaching role was to challenge myself, develop my soft skills, and mentor and teach my peers. I also wanted to see the challenges that universities and students currently face, given the changes in education and the work landscape since I was a student.

ACM offered a part-time role (2 days a week) as a sessional tutor, teaching the programming discipline for the 1st and 2nd years. As it was a course accredited by Falmouth, I had existing course material and assignments to adapt to ACM's resources and multi-discipline course style. This meant focusing on Unity over Unreal and keeping the course to a single programming language as much as possible.

Unfortunately, the 1st years who were looking to join the programming discipline decided at the last minute not to enrol. Instead, I provided Unity and programming support, taught a set of Intro to Programming lessons to any student at ACM who wished to learn, and taught several lessons for the Game Design discipline.

I decided to leave this role after a semester in order to focus on a new opportunity in Project Management at Bravo Co, where I had previously been part-time.

I started at Bravo Co as Senior Developer (part-time) with an agreed intention that we would work on transitioning me to a management role over time.

This was during two major feature releases across two development teams: a UI code architecture refactor and the implementation of the shop. I was fixing bugs while updating documentation for new developers, and as time went on, it became clear that being part-time prevented me from being fully involved in the team and product.

When the opportunity arose to fill the Project Manager (PM) role as our current PM was leaving, I decided to drop the ACM tutor role and fully commit to being a PM.

Over the next few weeks, I shadowed the PM, and we worked together to create a set of handover documents covering processes and useful links, while I got a sense of how the different teams communicated and worked with her. During this time, I also talked and listened to members of the team to understand their needs, frustrations, concerns and workflows. I later reviewed retrospective reports to look for recurring themes and to see whether any actions had been taken.

Communication between the different teams and leadership was a common theme that came up, and one I had observed during my time as a Software Developer. Often a discussion would happen within a group of people, and the decision would then be handed to a team to carry out without context or any involvement.

We already had a Slack #po-feedback channel for the team to ask questions, but it wasn't really used. I started using it to surface questions and decisions made in private groups to the rest of the team, and would 'poke' specific people for feedback and/or confirmation.

I also created a Project Dashboard Confluence page containing high-level information about upcoming releases and content, progress, expected release dates, links to relevant design specifications and Slack discussion channels about key features. The aim was to create a one-page project 'hub' so that anyone who wanted to know the current state of the project could go there and get to the relevant content. It used JIRA filters to keep track of bugs (won't fix, fixed bugs found in a previous release, etc.) as well as the general high-level progress of stories.

This replaced a daily report that used to be compiled manually and sent to the team, saving some day-to-day admin work while still delivering the information the whole studio wanted. I would send periodic, studio-wide messages on Slack about current progress as a 'broadcast' for people who prefer information to be sent to them, especially if the plan had changed or something had started to slip.

JIRA was the company's project management tool of choice, and as the only project manager, I wanted to reduce the time spent managing JIRA itself. In the short term, I decided to focus on JIRA's 'Releases' feature, scoping stories into releases from a prioritised backlog, which in this case was as simple as adding a Fix Version.

Managing JIRA was now simply a case of backlog refinement with the leadership team and standups around the release story listings.

I was lucky to have an experienced team who worked well together and could self-manage, which gave me the confidence to try different agile practices, including #NoEstimates. In one case where we had a fixed deadline, instead of estimating each story, I asked the team whether they could complete the proposed release stories in the timeline and, if not, what we would have to remove from the release to make it feel achievable.

This whole process took around 5-10 minutes, and they managed to complete ~95% of their estimate.

We still had to go through each ticket to explain the work needed, which took longer than I would have liked, and I am currently working on a few ideas for mitigating that going forward.

My largest challenge was managing the stakeholders and getting them to discuss and commit to a clear direction for the studio. This was particularly difficult due to the size of the leadership team and assumptions being made about what had been agreed. Sometimes this required drilling down for details and/or breaking things down into their components to ensure that we all understood the same thing.

I also focused on getting to the 'why' so I could give purpose to the work given to the team. When I joined, there was a feeling of 'top-down' leadership with little autonomy. I'm a big believer in giving teams room to work and wanted more of a 'bottom-up' flow. For that to happen, the team needed a clear purpose from the studio to focus their work.

This goal of a team-driven product and process is still a work in progress, and I am initially aiming for baby steps towards it. Many of the concepts and ideas will come from Pixar's and Basecamp's cultures and workflows, as both are very open about how they work and the lessons they've learned over time.

Unfortunately, the role was made redundant due to an investment round falling through.

As the Partner Relations Manager for PlayCanvas and Snap Games, I aimed to provide a level of support, responsiveness and engagement that surpassed expectations of any game engine provider. This led to an NPS of 100 over a 30-day average with our paid users.

The services provided include:

With the growing number of contact points across Slack, forums, email and social media, I maintained an issue tracker for support that collated and centralised all queries into a single queue. This made it easier for me and the team to triage and prioritise, and to set clearer expectations for our users.

Even with our user base doubling between 2020 and 2022, PlayCanvas was consistently praised for the level and quality of support that I provided.

The issue tracker also acts as the central hub for all documentation, notes and links to relevant information such as metrics, game pitch documents, etc. This allowed anyone on the team to quickly access the information they needed to take on a support query and, more importantly, allowed someone to take over in my absence.

Some user queries also resulted in prototype work becoming fully supported features, such as exporting projects to the Facebook Playable Ad format.

As the team is still relatively small, I also tried to 'work myself out of a job' and looked for ways to reduce the number of support queries coming in, so that the quality of support could scale. This has included:

Due to be updated!

Senior Web Rendering Engineer with Phaser WebGL Games Engine

Here is a collection of notebooks and favourite blog posts that I've been keeping while learning new technologies and workflows and solving problems.

They are used as a personal knowledge base and reference guide to cement what I've learned. They have since been made public to share and help others.